Testing LLMs: Evaluating Tool Use in Real-World Scenarios

How We Test

LLMs don’t just need to answer questions—they need to choose the right tools when connected to an MCP server. Our testing system is designed to measure exactly that, giving us a clear picture of how well models navigate real-world tool use.

The foundation of our testing approach is a structured test script. Each script consists of prompts that either directly request or subtly imply a tool call from the manifest. By varying the clarity and context of these prompts, we can assess whether the model consistently recognizes the appropriate action to take.

To extend beyond simple one-off requests, we also simulate back-and-forth conversations. In these scenarios, a second LLM acts as the “user” or “server,” requiring the model under test to complete multi-step interactions before accurately making the correct tool call. This allows us to evaluate not only tool choice but also the time taken to reach the right decision.

All of this runs automatically across multiple LLMs, facilitating easy head-to-head performance comparisons under identical conditions.

Our Test Process

- Manifest Preparation: Load and validate the MCP manifest to establish the ground truth.

- Test Script Creation: Define JSON-based scripts, with approximately 30 questions per tool.

Scripts cover a range of categories:

- Golden-path: Direct, unambiguous requests

- Embedded: Requests buried inside longer text

- Ambiguous: Underspecified or vague requests

- Multilingual: Common non-English phrasing

Most of our testing focuses on embedded and ambiguous prompts, as these are where LLMs are most likely to struggle.

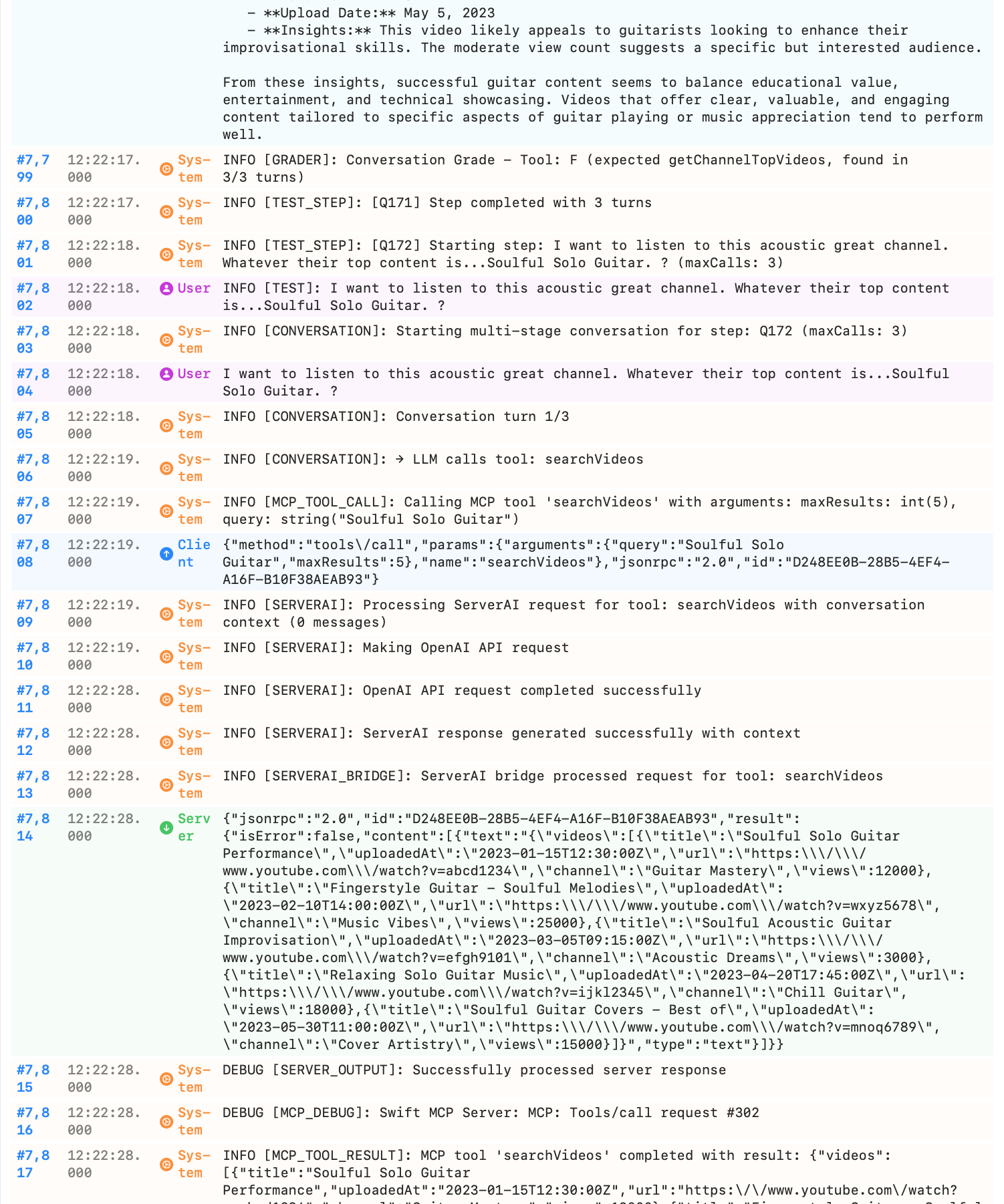

- Test Execution: Run scripts through both single-LLM and multi-LLM workflows, capturing multi-stage reasoning and tool use over multiple turns.

- Results Analysis: Produce detailed logs, exportable results, and side-by-side model comparisons. Our logs capture every step—from the LLM’s first response to the tool call and the server’s reply—ensuring complete transparency of the request path.

Key Benefits

- Fully Automated: No manual intervention is required once scripts are created.

- Deterministic Grading: Each run is reproducible and comparable.

- Cross-Model Insight: Consistent scoring highlights strengths and weaknesses across LLMs.

- Realistic Testing: Prompts reflect the messy, context-rich scenarios that real users encounter.

- Deep Visibility: Comprehensive logs illustrate the full decision path, from prompt to tool call to server response.

Reading time: 2 min read

Published: 9/30/2025